Software delivery has compressed timelines, expanded attack surfaces, and dissolved the boundaries between development and operations. In this environment, the DevSecOps Pipeline is no longer a conceptual ideal—it is the only viable mechanism to continuously enforce security without halting delivery. Yet most organizations still treat the DevSecOps Pipeline as an extension of CI/CD, rather than as a system of control points governing how software moves from code to runtime.

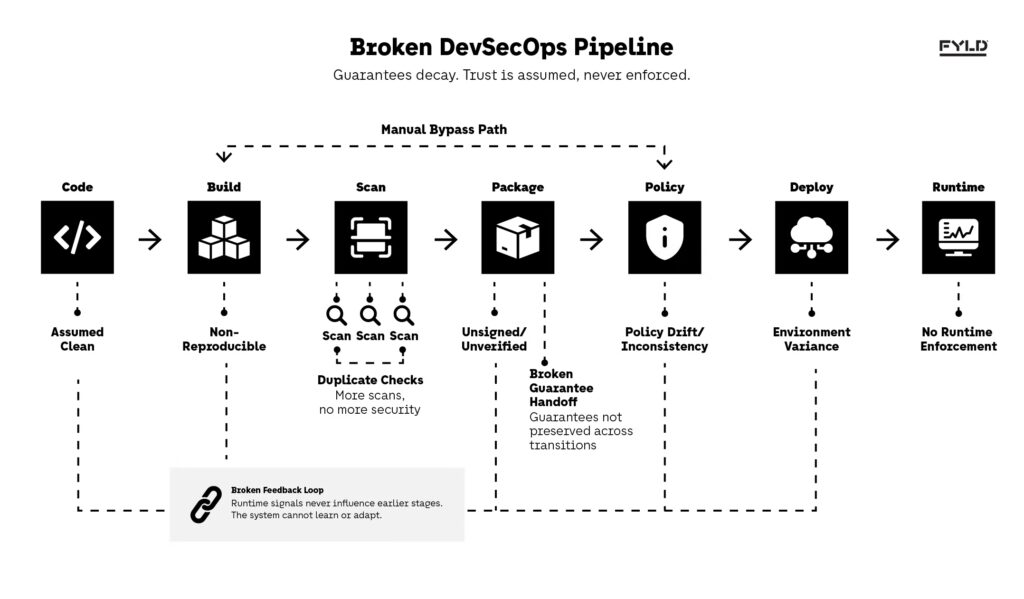

The problem is not the absence of security tools, but the absence of structure. Static analysis, dependency scanning, container checks, and runtime monitoring are often implemented in isolation, each producing signals that are rarely reconciled. This fragmentation creates a false sense of coverage: vulnerabilities are detected, but not contextualized; policies exist, but are not enforced consistently across the DevSecOps Pipeline. The result is predictable—security becomes reactive, fragmented, and ultimately bypassed under delivery pressure.

Current approaches fail because they optimize for tool adoption instead of system design. Adding more scanners to the DevSecOps Pipeline does not increase security if the pipeline itself lacks clear control boundaries. In fact, it often introduces noise, slows feedback loops, and encourages teams to selectively ignore signals. This tool-first approach runs counter to modern software supply chain guidance, which emphasizes embedding security across the entire lifecycle, from design through deployment, rather than treating it as a set of isolated checks.

What’s missing is a blueprint that treats the DevSecOps Pipeline as an architectural system, where each stage enforces specific guarantees, and where security signals are not just generated but propagated, validated, and acted upon. Instead of thinking in terms of tools, we need to think in terms of state transitions: what must be true for code to move forward, and what must be enforced once it is running.

This article breaks down the DevSecOps Pipeline from that perspective. We will define the pipeline as a system of security boundaries, decompose its stages from code scanning to runtime protection, and show how to operationalize enforcement without sacrificing delivery speed. More importantly, we will expose the tradeoffs and failure modes that emerge when these boundaries are poorly designed—and how to avoid them in real-world systems.

Defining the DevSecOps Pipeline as a System of Security Boundaries

What the DevSecOps Pipeline actually represents

The DevSecOps Pipeline is often described as a sequence of stages—build, test, deploy—but this framing hides its actual role. In practice, the DevSecOps Pipeline is a system that governs how software transitions between states, with each transition requiring explicit validation of security guarantees.

At a system level, this means the pipeline is not about automation, but about control. Every movement from source code to artifact, from artifact to deployment, and from deployment to runtime must satisfy conditions that are both verifiable and enforceable. Without these conditions, the DevSecOps Pipeline becomes a transport mechanism rather than a governance system.

The architectural implication is that security cannot be attached to stages; it must be embedded in the transitions themselves. This is consistent with how modern supply chain frameworks define integrity as a property of the entire lifecycle rather than individual checkpoints, as outlined in the SLSA framework for artifact provenance and build integrity.

Why stage-based thinking breaks down

Treating the DevSecOps Pipeline as a linear sequence introduces a critical failure mode: it assumes that once a stage passes, its guarantees persist. In reality, each transformation introduces new risk. A dependency scan at build time does not guarantee that the deployed container remains compliant after configuration changes or environment injection.

This creates a false sense of progression. Teams believe they are “secure up to deployment,” when in fact the security posture resets with each transformation. The pipeline becomes a series of disconnected validations rather than a continuous system of trust.

The tradeoff here is between simplicity and correctness. Stage-based models are easier to visualize and implement, but they collapse under scale because they cannot express cross-stage dependencies. For example, a vulnerability detected in a dependency may need to invalidate not just the build stage, but also any downstream artifacts derived from it. Without a system-level view, the DevSecOps Pipeline cannot enforce this propagation.
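One way to picture this propagation is as a walk over a derivation graph. The sketch below is a minimal illustration under stated assumptions, not a prescribed implementation: the `derived_from` mapping and the artifact names are hypothetical, and a real system would read this lineage from build provenance records.

```python
from collections import deque

def invalidated_by(root: str, derived_from: dict[str, list[str]]) -> set[str]:
    """Return every artifact that transitively derives from `root`.

    `derived_from` maps an artifact to the inputs it was built from
    (a hypothetical structure used only for this illustration).
    """
    # Invert the mapping so we can walk from an input to its consumers.
    consumers: dict[str, list[str]] = {}
    for artifact, sources in derived_from.items():
        for source in sources:
            consumers.setdefault(source, []).append(artifact)

    affected: set[str] = set()
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for child in consumers.get(current, []):
            if child not in affected:
                affected.add(child)
                queue.append(child)
    return affected

# Hypothetical lineage: a library feeds a package, which feeds an image.
derived_from = {
    "app.jar": ["libfoo-1.2"],
    "app-image": ["app.jar", "base-image"],
    "worker-image": ["base-image"],
}
affected = invalidated_by("libfoo-1.2", derived_from)
```

Here a finding in `libfoo-1.2` marks both `app.jar` and `app-image` as affected, while `worker-image` is untouched because it never consumed the library.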

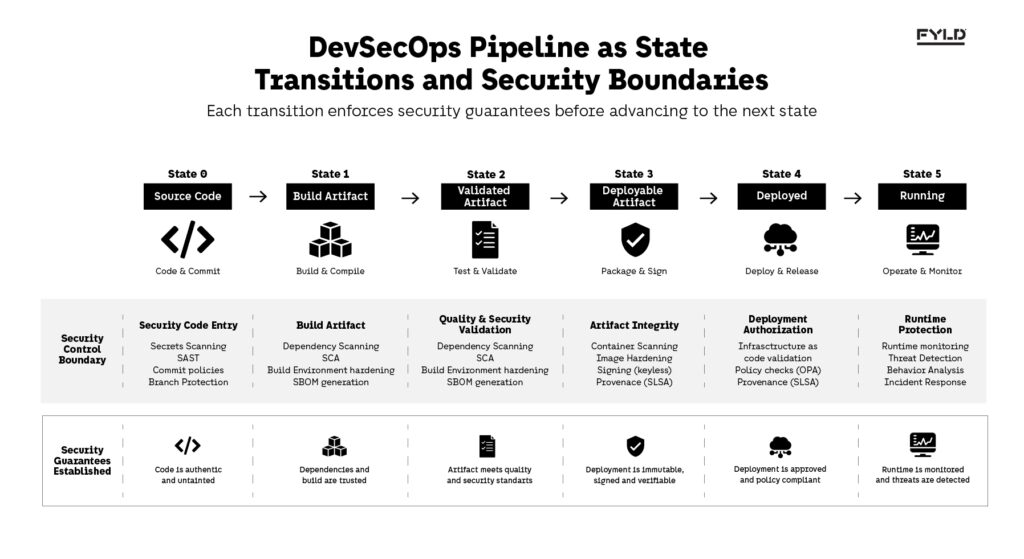

Reframing the DevSecOps Pipeline as state transitions

A more accurate model is to treat the DevSecOps Pipeline as a sequence of state transitions, where each state represents a version of the system with specific guarantees. The transition between states is only allowed if those guarantees are satisfied.

This reframing changes how controls are designed. Instead of asking “did the scan pass,” the system asks “what properties does this artifact now guarantee, and are they sufficient for the next state.” This allows security signals to become part of the artifact’s identity, not just logs attached to a stage.

The non-obvious implication is that enforcement must be persistent, not ephemeral. If a guarantee is established at build time, it must be verifiable at deploy time and enforceable at runtime. Otherwise, the DevSecOps Pipeline cannot maintain integrity across its own transitions.
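The state-transition model can be sketched in a few lines. This is an illustration only: the state names, the guarantee names, and the `REQUIRED` table are assumptions made for the example, not a standard vocabulary.

```python
from dataclasses import dataclass, field

# Hypothetical guarantees an artifact must carry to ENTER each state.
REQUIRED = {
    "built": {"commit_signed"},
    "deployable": {"commit_signed", "sbom_attached", "image_signed"},
    "running": {"commit_signed", "sbom_attached", "image_signed", "policy_approved"},
}

@dataclass
class Artifact:
    name: str
    state: str = "source"
    guarantees: set[str] = field(default_factory=set)

def transition(artifact: Artifact, target: str) -> Artifact:
    """Move `artifact` to `target` only if it carries every required guarantee."""
    missing = REQUIRED[target] - artifact.guarantees
    if missing:
        raise PermissionError(
            f"{artifact.name} cannot enter '{target}': missing {sorted(missing)}"
        )
    # Guarantees travel with the artifact instead of staying in stage logs.
    return Artifact(artifact.name, target, set(artifact.guarantees))

art = Artifact("api", guarantees={"commit_signed"})
art = transition(art, "built")
try:
    transition(art, "deployable")
    blocked = ""
except PermissionError as exc:
    blocked = str(exc)
```

The question being asked is no longer "did the scan pass" but "does this artifact carry what the next state demands". In the example, the artifact reaches `built` but is refused entry to `deployable` because it lacks a signature and an SBOM.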

Decomposing the DevSecOps Pipeline into Enforceable Security Layers

The DevSecOps Pipeline as a layered control system

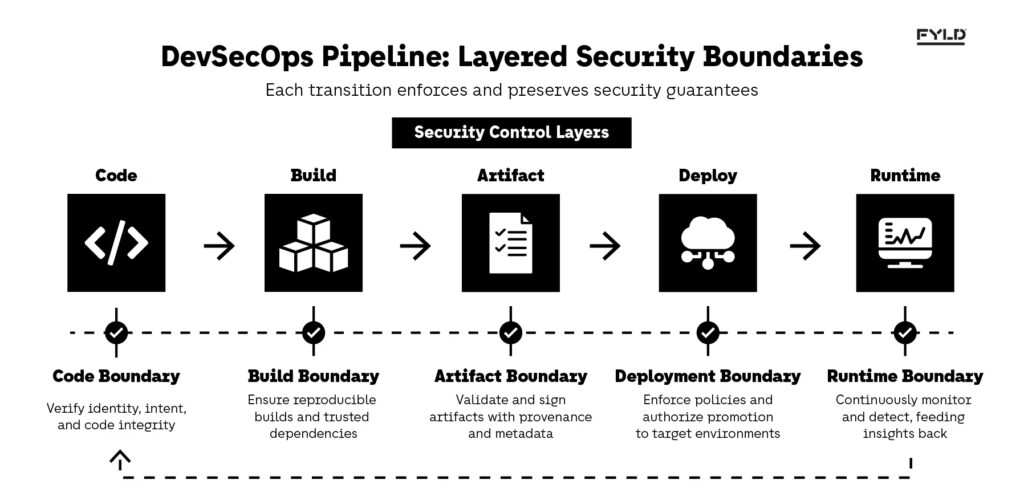

Once the DevSecOps Pipeline is understood as a system of state transitions, the next step is to decompose it into layers where each transition enforces a distinct category of guarantees. These layers are not stages in the traditional sense; they are control boundaries that define what must be validated before the system is allowed to move forward.

At a structural level, the DevSecOps Pipeline can be decomposed into five core boundaries: code entry, build integrity, artifact validation, deployment authorization, and runtime protection. Each boundary is responsible for transforming uncertainty into assurance. The pipeline, therefore, is not linear—it is cumulative. Guarantees established early must persist and be verifiable in later states.

This layered model aligns with how modern secure delivery frameworks treat software as a chain of trust, where each link must be validated and traceable. The NIST Secure Software Development Framework (SSDF) emphasizes this continuity, recommending that security practices be integrated throughout the software development lifecycle rather than confined to isolated checkpoints.

Code boundary: establishing trust at entry

The first boundary in the DevSecOps Pipeline is the point where code enters the system. This is where authenticity and intent must be established. If this boundary is weak, every downstream guarantee becomes unreliable, because the pipeline is operating on untrusted input.

This boundary typically enforces controls such as commit signing, branch protection, and secrets detection. However, the architectural implication is deeper: the pipeline must be able to assert that the code being processed is both attributable and untampered. Without this, later validations—such as dependency scanning or artifact signing—are built on unstable assumptions.

The tradeoff at this boundary is between developer velocity and entry friction. Strong controls can slow down contribution flow, especially in high-velocity teams. But weakening this boundary shifts risk downstream, where remediation becomes more expensive and less reliable. A properly designed DevSecOps Pipeline treats this boundary as non-negotiable, while optimizing automation to reduce friction rather than removing controls.
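As a rough illustration of an automated entry control, the sketch below scans the added lines of a unified diff for two well-known secret shapes. The patterns are illustrative and far from exhaustive; dedicated secret scanners use much larger rule sets and entropy checks. The function name `find_secrets` and the sample diff are made up for this example, and the key shown is the placeholder value AWS uses in its own documentation.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
}

def find_secrets(diff_text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each added line that matches a rule."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect lines added by the change, skipping the "+++" file header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings

sample_diff = "\n".join([
    "+++ b/config.py",
    "+REGION = 'eu-west-1'",
    "+KEY = 'AKIAIOSFODNN7EXAMPLE'",
    "-OLD = 1",
])
findings = find_secrets(sample_diff)
```

Running a check like this before code is accepted keeps the control in place while removing most of the manual friction: a contributor gets a precise line and rule name rather than a rejected review.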

Build boundary: ensuring reproducibility and dependency integrity

Once code is accepted, the DevSecOps Pipeline transitions into the build boundary, where the system must guarantee that the resulting artifact is reproducible and its dependencies are trustworthy. This is where many teams introduce dependency scanning, but often without enforcing reproducibility as a core property.

Reproducibility is critical because it allows the system to verify that an artifact corresponds exactly to its source. Without it, the DevSecOps Pipeline cannot guarantee that the artifact being deployed is the one that was validated. Techniques such as deterministic builds and Software Bill of Materials generation become essential to maintain this integrity.

The tradeoff here lies in complexity versus traceability. Achieving reproducible builds requires stricter control over environments, which increases operational overhead. However, without it, the pipeline loses the ability to enforce integrity across transitions. A common failure mode is scanning dependencies without being able to prove that the scanned version matches what is ultimately deployed.
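Both properties described here reduce to comparing content digests. The sketch below is a simplified illustration: the byte strings stand in for real build outputs, and the `scan_record` shape is an assumption made for the example.

```python
import hashlib

def digest(data: bytes) -> str:
    """Content digest used as the artifact's identity."""
    return "sha256:" + hashlib.sha256(data).hexdigest()

def is_reproducible(build_a: bytes, build_b: bytes) -> bool:
    """Two independent builds of the same source should be byte-identical."""
    return digest(build_a) == digest(build_b)

def scanned_matches_deployed(scan_record: dict, deployed: bytes) -> bool:
    """A scan only counts if it covered exactly the bytes being deployed."""
    return scan_record["artifact_digest"] == digest(deployed)

# Stand-in bytes for a build output; the record shape is hypothetical.
artifact = b"compiled-output-v1"
scan_record = {"artifact_digest": digest(artifact), "critical_findings": 0}

same_build = is_reproducible(artifact, b"compiled-output-v1")
patched_ok = scanned_matches_deployed(scan_record, b"compiled-output-v1 + patch")
```

In this example `same_build` is true and `patched_ok` is false: the scan result cannot be carried over to bytes it never saw, which is exactly the failure mode of scanning one version and deploying another.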

Artifact boundary: validating what is actually deployable

The artifact boundary is where the DevSecOps Pipeline must assert that what has been built is safe to deploy. This is distinct from build validation, because the artifact may undergo transformations such as containerization, packaging, or signing before it is considered deployable.

At this boundary, controls include container scanning, image hardening, and provenance validation. The architectural shift here is that the artifact must carry its own guarantees. Instead of relying on external logs or pipeline state, the artifact should be self-describing, embedding metadata such as signatures, SBOMs, and provenance attestations.

This approach aligns with supply chain integrity models such as in-toto, which define how artifacts can carry verifiable metadata across environments. The DevSecOps Pipeline becomes a system that not only validates artifacts but embeds trust into them.

The tradeoff is between portability and enforceability. Richly annotated artifacts are more secure, but they introduce complexity in verification and compatibility. A poorly designed pipeline validates artifacts at this stage but fails to enforce those guarantees later, effectively discarding the trust it just created.

Deployment boundary: controlling what is allowed to run

The deployment boundary governs whether an artifact is allowed to transition into a running system. This is where the DevSecOps Pipeline enforces policy decisions based on guarantees established in previous layers.

Controls at this boundary include infrastructure-as-code validation and policy enforcement systems such as Open Policy Agent. The key is that this boundary should not re-validate everything from scratch. Instead, it evaluates whether the guarantees already attached to the artifact satisfy the requirements of the target environment.

A common failure mode is duplication, where pipelines re-run scans or validations at deployment, increasing latency without improving assurance. A well-structured DevSecOps Pipeline treats deployment as a decision point, not a reprocessing step.

The tradeoff is between safety and speed. Strict enforcement increases confidence but can delay releases. Weak enforcement accelerates delivery but undermines the entire system. The balance lies in making policies deterministic and grounded in verifiable guarantees.
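To make "decision point, not reprocessing step" concrete, the sketch below decides on deployment using only guarantees that already travel with the artifact. Nothing is rescanned. The `Attestations` fields, the severity scale, and the per-environment requirements are assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Attestations:
    signed: bool
    has_sbom: bool
    max_severity: str  # highest severity recorded by the build-time scan

SEVERITY_RANK = {"none": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}

# Hypothetical per-environment requirements.
ENV_REQUIREMENTS = {
    "staging": {"signed": True, "has_sbom": False, "severity_ceiling": "high"},
    "production": {"signed": True, "has_sbom": True, "severity_ceiling": "medium"},
}

def decide(att: Attestations, environment: str) -> tuple[bool, list[str]]:
    """Return (allowed, reasons) using only guarantees already attached."""
    req = ENV_REQUIREMENTS[environment]
    reasons = []
    if req["signed"] and not att.signed:
        reasons.append("artifact is not signed")
    if req["has_sbom"] and not att.has_sbom:
        reasons.append("SBOM is missing")
    if SEVERITY_RANK[att.max_severity] > SEVERITY_RANK[req["severity_ceiling"]]:
        reasons.append(
            f"severity {att.max_severity} exceeds {req['severity_ceiling']}"
        )
    return (not reasons, reasons)

att = Attestations(signed=True, has_sbom=False, max_severity="high")
staging_result = decide(att, "staging")
production_result = decide(att, "production")
```

The same artifact is admitted to staging and refused in production, and the refusal comes with explicit reasons. Because the function is pure and its inputs are verifiable, the outcome is deterministic and cheap to evaluate.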

Runtime boundary: enforcing security beyond deployment

The final boundary in the DevSecOps Pipeline is runtime, where guarantees must hold under real-world conditions. This is where monitoring, anomaly detection, and behavioral enforcement operate continuously rather than as one-time checks.

Unlike earlier boundaries, runtime is not a discrete transition. It is an ongoing state where enforcement must adapt to changes in behavior, configuration, and threat landscape. This introduces a critical system interaction: runtime signals must feed back into earlier stages of the DevSecOps Pipeline.

Modern cloud-native security models emphasize this feedback loop, where runtime insights influence build policies, dependency selection, and deployment decisions. Without this loop, the pipeline becomes static and gradually diverges from actual risk conditions.

The tradeoff here is between observability depth and operational overhead. Deeper monitoring improves detection but increases cost and system complexity. A mature DevSecOps Pipeline integrates runtime feedback selectively, ensuring that signals lead to actionable changes rather than noise.

With the DevSecOps Pipeline decomposed into enforceable layers, the next step is to operationalize these boundaries—defining how guarantees are implemented, propagated, and enforced in systems that must scale without collapsing under their own complexity.

Operationalizing the DevSecOps Pipeline: From Guarantees to Enforcement

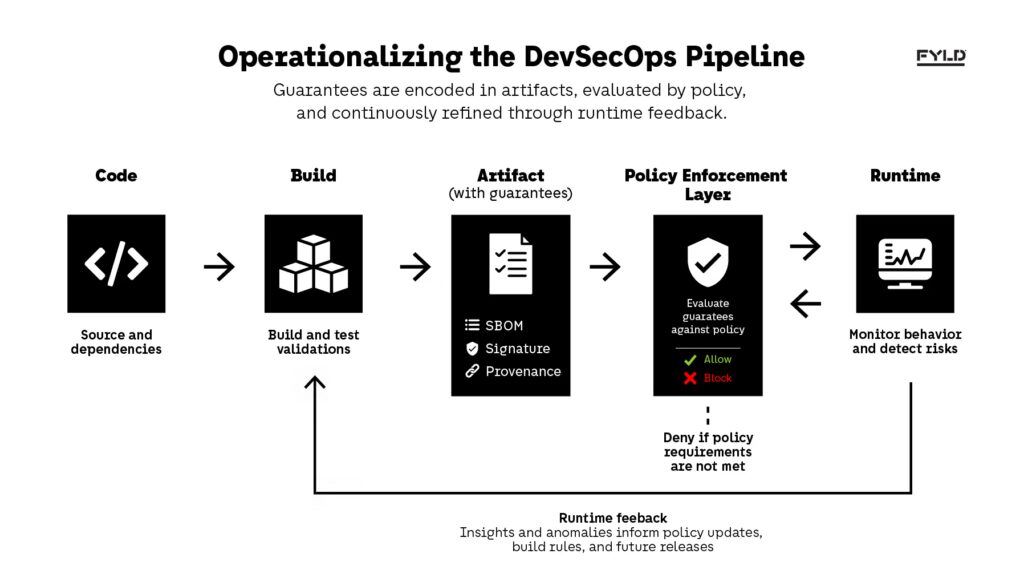

Turning guarantees into enforceable system properties

Defining boundaries in the DevSecOps Pipeline is only meaningful if the guarantees established at each transition can be enforced beyond the moment they are created. Most implementations fail at this step: they generate signals—scan results, test outcomes, compliance checks—but those signals remain local to the stage where they were produced.

Operationalizing the DevSecOps Pipeline requires transforming those signals into system properties that persist across transitions. This is where automation becomes critical—not as an efficiency tool, but as the enforcement layer itself. In practice, this shift is what separates pipelines that depend on human intervention from those that enforce security deterministically. Real-world DevSecOps automation patterns, such as secret detection, dependency policy enforcement, and infrastructure validation, demonstrate how these guarantees can be enforced consistently at the system level rather than relying on manual checks.

This persistence changes the role of the pipeline entirely. Instead of observing whether something passed at a given moment, the DevSecOps Pipeline becomes responsible for maintaining and validating guarantees across its entire lifecycle. The architectural implication is that enforcement must be durable, not ephemeral. If a guarantee cannot be verified at a later stage, it cannot be trusted, regardless of whether it passed earlier validation.

The tradeoff here is between simplicity and durability. It is easier to rely on pipeline logs and stage-level outputs, but doing so prevents guarantees from being enforced later. A mature DevSecOps Pipeline accepts the added complexity of encoding and enforcing guarantees in exchange for system-wide consistency and trust.

This model clarifies that the DevSecOps Pipeline does not enforce security through isolated checks, but through decisions driven by guarantees that persist with the artifact and evolve based on runtime feedback.

Encoding guarantees into artifacts

Once guarantees are defined, they must be embedded into the artifacts that move through the DevSecOps Pipeline. This is the only way to ensure that validation is not lost between stages. Instead of treating artifacts as passive outputs, the system must treat them as carriers of security context.

This typically involves attaching structured metadata such as Software Bill of Materials, cryptographic signatures, and provenance attestations. The key is that these elements are not optional—they define what the artifact is allowed to do in subsequent transitions. An unsigned artifact, or one without traceable provenance, should not be considered equivalent to one that carries verifiable guarantees.

The architectural implication is that artifact storage becomes part of the trust model. Registries and repositories must preserve metadata integrity and expose it for verification. If the DevSecOps Pipeline cannot reliably retrieve and validate this information, the guarantees effectively disappear.

The tradeoff is between portability and control. Richly annotated artifacts may introduce compatibility challenges across environments, but without this metadata, the system cannot enforce policies based on prior validation. Many pipelines fail here by generating SBOMs or signatures but never using them as decision inputs.
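A minimal sketch of an artifact that carries its own guarantees might look like the following. It is deliberately simplified: an HMAC with a shared key stands in for the asymmetric signatures a real signing system would use, and the statement layout is an assumption for this example rather than the in-toto or SLSA attestation format.

```python
import hashlib
import hmac
import json

def make_attestation(artifact: bytes, sbom: list[str], builder: str, key: bytes) -> dict:
    """Bind SBOM and provenance to the artifact digest, then sign the statement."""
    statement = {
        "subject_digest": hashlib.sha256(artifact).hexdigest(),
        "sbom": sorted(sbom),
        "builder": builder,
    }
    payload = json.dumps(statement, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"statement": statement, "signature": signature}

def verify_attestation(artifact: bytes, attestation: dict, key: bytes) -> bool:
    """Check the signature, then check the statement describes this artifact."""
    payload = json.dumps(attestation["statement"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, attestation["signature"]):
        return False
    actual = hashlib.sha256(artifact).hexdigest()
    return attestation["statement"]["subject_digest"] == actual

KEY = b"demo-only-key"  # hypothetical; never hard-code real keys
image = b"image-layer-bytes"
attestation = make_attestation(
    image, ["requests==2.32.0", "urllib3==2.2.1"], "ci-runner-1", KEY
)
```

Verification fails if either the artifact bytes or the statement change after signing, so the SBOM and provenance become usable decision inputs at any later boundary rather than files that are generated and forgotten.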

Enforcing policy as a decision layer

Once guarantees are encoded and transported, the DevSecOps Pipeline must introduce a decision layer that determines whether a transition is allowed. This is where policy enforcement becomes central—not as an additional check, but as the mechanism that governs progression.

Policy engines evaluate whether the guarantees attached to an artifact satisfy predefined conditions. These conditions may include dependency risk thresholds, signature verification, compliance rules, or environment-specific constraints. The key is that policy evaluation must be deterministic and based on verifiable inputs, not manual interpretation.

This approach aligns with policy-as-code models such as Open Policy Agent, where rules are expressed declaratively and evaluated consistently across environments. The DevSecOps Pipeline, in this sense, becomes a system where every transition is a controlled decision rather than an automatic progression.

The failure mode here is fragmentation. If policies are enforced differently across stages or environments, the pipeline loses coherence. A decision made at deployment must reflect the same guarantees validated at build time. Otherwise, enforcement becomes inconsistent, and trust breaks down.

Propagating signals across the pipeline

Even with encoded guarantees and policy enforcement, the DevSecOps Pipeline must ensure that signals propagate correctly across its boundaries. This is where many systems degrade: signals are generated and even embedded, but they are not consistently consumed.

Propagation means that each boundary must not only validate new conditions but also re-evaluate existing guarantees in the context of the next state. For example, a vulnerability discovered after build time should invalidate not only future deployments but also any currently deployed instances derived from the affected artifact.

This introduces a system interaction between stages that is often overlooked. The pipeline is not strictly forward-moving; it must support backward influence. A runtime signal may need to trigger rebuilds, policy updates, or artifact revocation. Without this feedback capability, the DevSecOps Pipeline becomes static and eventually diverges from real-world risk.

The tradeoff is between responsiveness and stability. Continuous propagation of signals can introduce churn, especially in large systems. However, limiting propagation reduces the system’s ability to react to emerging threats. The balance lies in defining which signals are authoritative and how far their impact should extend.

Integrating runtime feedback into earlier boundaries

The final step in operationalizing the DevSecOps Pipeline is closing the loop between runtime and earlier stages. Runtime is where assumptions are tested against reality, and it is the only place where certain classes of risk become visible.

This means that runtime signals must not remain isolated in monitoring systems. They must influence how earlier boundaries operate. For example, repeated runtime anomalies linked to a specific dependency may require tightening build policies or updating dependency selection criteria.
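The dependency example can be sketched as a small feedback function. Everything here is hypothetical: the anomaly record shape, the threshold of three, and the policy structure are assumptions chosen to show the mechanism, not recommended values.

```python
from collections import Counter

def dependencies_to_block(anomalies: list[dict], threshold: int) -> set[str]:
    """Return dependencies linked to at least `threshold` runtime anomalies."""
    counts = Counter(a["dependency"] for a in anomalies if a.get("dependency"))
    return {dep for dep, seen in counts.items() if seen >= threshold}

def tighten_build_policy(policy: dict, anomalies: list[dict], threshold: int = 3) -> dict:
    """Produce a new policy version; the input policy is left untouched."""
    blocked = set(policy["blocked_dependencies"])
    blocked |= dependencies_to_block(anomalies, threshold)
    return {
        "version": policy["version"] + 1,
        "blocked_dependencies": sorted(blocked),
    }

# Hypothetical anomaly records exported from a runtime monitoring system.
anomalies = [
    {"service": "api", "dependency": "libparse==0.9"},
    {"service": "api", "dependency": "libparse==0.9"},
    {"service": "worker", "dependency": "libparse==0.9"},
    {"service": "worker", "dependency": "fastlog==1.4"},
    {"service": "api", "dependency": None},
]
policy = {"version": 7, "blocked_dependencies": []}
new_policy = tighten_build_policy(policy, anomalies)
```

Emitting a new policy version instead of mutating the old one keeps the change auditable: it is possible to see which runtime evidence produced which build-time rule.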

Modern cloud-native security practices emphasize this feedback loop as a core capability, where runtime insights continuously refine build-time and deployment-time controls. Without this loop, the DevSecOps Pipeline cannot evolve—it can only enforce static rules.

The tradeoff here is complexity versus adaptability. Integrating runtime feedback requires coordination across systems that are often owned by different teams. However, without this integration, the pipeline remains blind to the effectiveness of its own controls.

Scaling the DevSecOps Pipeline Across Teams and Systems

Why the DevSecOps Pipeline breaks at scale

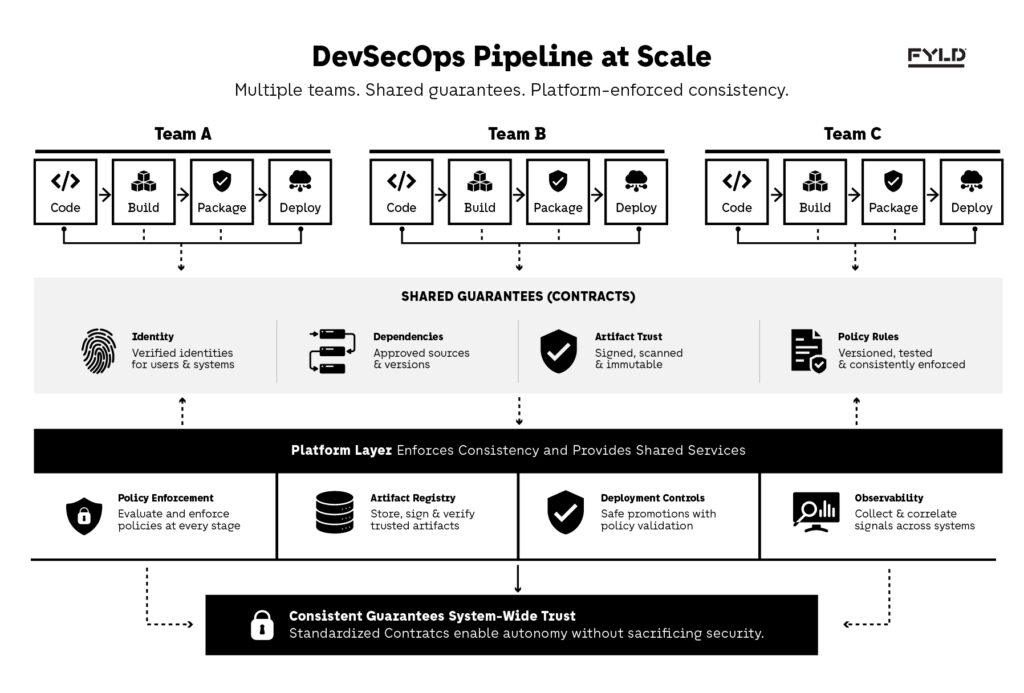

The DevSecOps Pipeline is relatively straightforward to design in isolation. A single team can define boundaries, enforce guarantees, and maintain consistency across its delivery flow. The difficulty emerges when that same model must operate across multiple teams, services, and environments.

At scale, the DevSecOps Pipeline stops being a single system and becomes a collection of interconnected pipelines, each with its own assumptions, tooling, and enforcement logic. Without coordination, these pipelines diverge. Guarantees are defined differently, policies are inconsistently enforced, and trust becomes localized rather than systemic. This fragmentation is not a tooling problem but a structural one, where local optimizations undermine system-wide integrity.

Scaling the DevSecOps Pipeline is not about replicating pipelines across teams, but about enforcing consistent guarantees through shared systems that each team can rely on without losing autonomy.

This pattern is consistent with how platform engineering is evolving. Organizations increasingly adopt new capabilities and automation practices, yet struggle to translate them into consistent, system-level outcomes. Recent research highlights that widespread adoption does not automatically produce organizational value when systems lack shared structures and enforcement models across teams.

The tradeoff here is between autonomy and consistency. Teams need flexibility to move quickly, but the DevSecOps Pipeline depends on shared guarantees. Without a common model, the pipeline loses its ability to enforce trust across organizational boundaries, and security becomes fragmented in the same way delivery often does at scale.

Standardizing guarantees without centralizing control

To scale the DevSecOps Pipeline, organizations must standardize what guarantees mean without dictating how every team implements them. This requires defining contracts rather than prescribing tools.

For example, instead of mandating a specific dependency scanner, the system defines what constitutes an acceptable dependency profile. Teams are free to choose how they achieve that state, as long as the resulting guarantees are verifiable and consistent.

This approach introduces a separation between implementation and validation. The DevSecOps Pipeline enforces outcomes, not methods. Guarantees become part of a shared language that allows different teams to interoperate without tightly coupling their internal processes.

The tradeoff is increased upfront design complexity. Defining clear, enforceable contracts requires alignment across teams and disciplines. However, without this abstraction, scaling the pipeline leads to fragmentation, where each team effectively operates its own version of security.
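A contract of this kind can be as simple as a shared record type plus one validation function. In the sketch below, the field names, the scanner labels, and the thresholds are all assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DependencyReport:
    """The shared contract: what every team reports, whatever scanner it uses."""
    artifact_digest: str
    scanner: str
    critical: int
    high: int
    unpinned: int

def meets_contract(report: DependencyReport, max_high: int = 0) -> bool:
    """Validate the outcome only; the scanner field is recorded but never judged."""
    return (
        report.critical == 0
        and report.high <= max_high
        and report.unpinned == 0
    )

# Two teams using different (hypothetical) scanners report in the same shape.
team_a = DependencyReport("sha256:aaa", scanner="scanner-x", critical=0, high=0, unpinned=0)
team_b = DependencyReport("sha256:bbb", scanner="scanner-y", critical=0, high=2, unpinned=0)

a_ok = meets_contract(team_a)
b_ok = meets_contract(team_b)
```

The validation never inspects which tool produced the report, which is the separation between implementation and validation described above. The design cost is agreeing on the fields up front.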

Platform engineering as the enforcement layer

At an organizational scale, the DevSecOps Pipeline is no longer owned by individual teams. It becomes part of a platform that provides shared capabilities for building, validating, and deploying software.

Platform engineering plays a critical role here by embedding enforcement into the infrastructure itself. Instead of relying on teams to implement controls, the platform ensures that certain guarantees are automatically enforced at key boundaries.

This may include:

- centralized artifact registries that enforce signing and provenance

- deployment systems that require policy validation before promotion

- runtime platforms that enforce baseline security configurations

The architectural implication is that the DevSecOps Pipeline becomes partially implicit. Teams interact with it through platform interfaces, but enforcement happens beneath the surface.

The tradeoff is between abstraction and visibility. Platform-driven enforcement reduces inconsistency, but it can obscure how guarantees are applied. If teams cannot understand or verify the system, they may attempt to bypass it, reintroducing risk.

Managing policy drift across environments

As systems scale, policies inevitably diverge. What is acceptable in development may not be acceptable in production. Different regions, compliance requirements, and risk tolerances introduce variation that the DevSecOps Pipeline must accommodate.

The challenge is ensuring that this variation does not break the underlying guarantee model. Policies can differ, but they must operate on the same inputs and produce consistent types of decisions.

This is where policy-as-code becomes essential. By defining policies declaratively and versioning them alongside code, the DevSecOps Pipeline can maintain consistency while allowing controlled variation. The Open Policy Agent ecosystem provides a strong foundation for managing policy across environments without duplicating logic.

The tradeoff is complexity versus flexibility. Supporting multiple policy contexts increases system complexity, but enforcing a single global policy is rarely practical. The solution lies in layering policies, where global guarantees are enforced universally and environment-specific rules are applied on top.
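Layering can be sketched as a merge that lets environments add rules but never override global ones. This is a plain-Python illustration of the idea rather than Open Policy Agent syntax, and the rule names and values are hypothetical.

```python
GLOBAL_RULES = {"require_signature": True, "max_critical": 0}

# Hypothetical environment-specific additions.
ENV_RULES = {
    "development": {"max_high": 10},
    "production": {"max_high": 0, "require_provenance": True},
}

def effective_policy(environment: str) -> dict:
    """Layer environment rules on top of global ones without weakening them."""
    policy = dict(GLOBAL_RULES)
    for rule, value in ENV_RULES.get(environment, {}).items():
        if rule in GLOBAL_RULES:
            raise ValueError(f"{environment} may not override global rule '{rule}'")
        policy[rule] = value
    return policy

def evaluate(facts: dict, environment: str) -> bool:
    """Apply one evaluation routine to the same inputs in every environment."""
    policy = effective_policy(environment)
    if policy["require_signature"] and not facts["signed"]:
        return False
    if facts["critical"] > policy["max_critical"]:
        return False
    if facts["high"] > policy.get("max_high", 0):
        return False
    if policy.get("require_provenance") and not facts["provenance"]:
        return False
    return True

facts = {"signed": True, "critical": 0, "high": 3, "provenance": True}
dev_allowed = evaluate(facts, "development")
prod_allowed = evaluate(facts, "production")
```

The artifact is accepted in development and rejected in production, yet both decisions come from the same inputs and the same evaluation logic. Only the layered thresholds differ, which keeps the variation explainable.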

Preserving feedback loops at scale

One of the first capabilities to degrade at scale is the feedback loop between runtime and earlier stages of the DevSecOps Pipeline. As systems grow, the distance between teams increases, and runtime signals often fail to reach the people or systems that can act on them.

This breaks one of the core properties established earlier: the pipeline must adapt based on real-world behavior. Without feedback, guarantees become static assumptions rather than continuously validated truths.

Scaling the DevSecOps Pipeline requires making feedback a first-class concern. This means:

- routing runtime signals into shared systems

- correlating those signals with artifacts and builds

- triggering automated responses where appropriate

The tradeoff is between signal fidelity and noise. Large systems generate vast amounts of runtime data, and not all of it is actionable. The pipeline must filter and prioritize signals to ensure that feedback leads to meaningful changes rather than alert fatigue.
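The correlation and filtering steps might be sketched as follows. The signal fields, the `deployments` and `builds` lookup tables, and the severity scale are assumptions for this example; in practice they would come from the deployment system and the build records.

```python
def correlate(
    signals: list[dict], deployments: dict[str, str], builds: dict[str, str]
) -> tuple[list[dict], list[dict]]:
    """Attach artifact digest and build id to each signal.

    Signals whose workload has no known artifact are returned separately
    so they can be escalated instead of silently dropped.
    """
    traced, untraced = [], []
    for signal in signals:
        digest = deployments.get(signal["workload"])
        if digest is None:
            untraced.append(signal)
            continue
        traced.append({**signal, "artifact": digest, "build": builds.get(digest)})
    return traced, untraced

def actionable(signals: list[dict], min_severity: int) -> list[dict]:
    """Keep only signals at or above the severity the team agreed to act on."""
    return [s for s in signals if s["severity"] >= min_severity]

# Hypothetical runtime signals and lookup tables.
signals = [
    {"workload": "api", "severity": 4, "kind": "unexpected_egress"},
    {"workload": "api", "severity": 1, "kind": "slow_start"},
    {"workload": "legacy", "severity": 5, "kind": "shell_spawned"},
]
deployments = {"api": "sha256:aaa"}   # workload -> artifact digest
builds = {"sha256:aaa": "build-1042"}  # artifact digest -> build id

traced, untraced = correlate(signals, deployments, builds)
to_act_on = actionable(traced, min_severity=3)
```

Only one signal survives both steps, and it now names the artifact and build that an automated response can target. The untraceable signal is kept aside because a workload with no known artifact is itself a gap worth investigating.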

With the DevSecOps Pipeline operating across teams, platforms, and environments, the system becomes more powerful—but also more fragile. The next section examines what happens when these mechanisms fail, and how to recognize and correct breakdowns before they compromise the entire pipeline.

Where DevSecOps Pipelines Fail: Hidden Breakdowns in System Integrity

When the DevSecOps Pipeline appears correct but isn’t

By the time a DevSecOps Pipeline reaches maturity, most of its components appear to be in place. Code is scanned, artifacts are built and signed, policies are enforced, and runtime is monitored. On the surface, the system looks complete. The failure is not in missing controls, but in how those controls relate to each other.

The most dangerous failure mode is structural invisibility. The DevSecOps Pipeline continues to execute, signals are generated, and deployments proceed, yet the guarantees established earlier no longer hold. This happens when validation is treated as a one-time event instead of a persistent property. A passed scan becomes a historical artifact, not an enforceable constraint.

This creates a system that looks secure but behaves inconsistently. The pipeline still produces outputs, but it no longer enforces trust. In this state, security becomes ceremonial—present in process, absent in outcome.

Guarantee decay across transitions

One of the most common breakdowns in the DevSecOps Pipeline is the silent decay of guarantees as the system transitions between states. A guarantee established at build time may not survive packaging, deployment, or runtime mutation.

For example, an artifact may pass dependency validation during the build stage, but later be modified through configuration injection or environment-specific overrides. If the pipeline does not re-verify or enforce the original guarantee, the system operates under assumptions that are no longer true.

The non-obvious implication is that guarantees are not inherently durable. They must be actively preserved and revalidated. Without this, the DevSecOps Pipeline becomes a chain of loosely connected validations rather than a continuous system of trust.

The tradeoff here is between performance and correctness. Continuous verification introduces overhead, but without it, the system cannot ensure that earlier guarantees remain valid. Many pipelines choose performance, unintentionally allowing guarantees to drift over time.
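One lightweight way to detect this kind of decay is to fingerprint the validated state and compare it again later. The sketch below assumes the guarantee covers an image digest plus its effective configuration; the function names and configuration keys are hypothetical.

```python
import hashlib
import json

def fingerprint(image_digest: str, config: dict) -> str:
    """Hash the image digest together with the effective configuration."""
    canonical = json.dumps({"image": image_digest, "config": config}, sort_keys=True)
    return hashlib.sha256(canonical.encode()).hexdigest()

def guarantee_still_holds(attested: str, image_digest: str, effective_config: dict) -> bool:
    """Re-verify at deploy or run time that nothing changed since validation."""
    return attested == fingerprint(image_digest, effective_config)

# State recorded when the artifact was validated at build time.
validated_config = {"DEBUG": "false", "TLS_MIN": "1.2"}
attested = fingerprint("sha256:abc", validated_config)

# An environment-specific override injected after validation.
overridden = {**validated_config, "DEBUG": "true"}

unchanged_ok = guarantee_still_holds(attested, "sha256:abc", validated_config)
override_ok = guarantee_still_holds(attested, "sha256:abc", overridden)
```

A single hash comparison is cheap compared with re-running a scan, so this style of check softens the performance tradeoff: the expensive validation happens once, and later boundaries only confirm that its subject has not changed.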

Duplicate validation without enforcement

Another failure mode is the illusion of security created by repeated validation. Many implementations of the DevSecOps Pipeline re-run the same scans at multiple stages—build, deploy, and even runtime—under the assumption that more checks increase safety.

In reality, duplicate validation without enforcement does not improve security. It introduces noise, increases latency, and creates ambiguity about which result is authoritative. Pipelines can become highly automated and still fail to enforce meaningful guarantees, as automation alone does not ensure that validation results are consistently applied or acted upon.

If a vulnerability is detected at deployment that was already detected at build time, the system has failed to enforce its own guarantees. This reflects a deeper issue: validation is being treated as a diagnostic activity rather than a control mechanism. The DevSecOps Pipeline should not be asking the same question multiple times; it should be ensuring that once a guarantee is established, it is enforced consistently across all transitions.

The tradeoff is between visibility and clarity. Repeated checks provide more data, but without a clear enforcement model, that data does not translate into stronger security.

Policy fragmentation across environments

As the DevSecOps Pipeline scales across environments, policies often diverge. Development, staging, and production environments introduce different constraints, leading to variations in enforcement logic.

This fragmentation creates a situation where the same artifact may be considered valid in one environment and invalid in another, without a clear explanation of why. The pipeline no longer represents a consistent system of guarantees, but a set of context-dependent rules.

The architectural implication is that policies must operate on a shared foundation, even when they differ in strictness. Without this, the DevSecOps Pipeline loses coherence, and teams cannot reason about what guarantees actually mean.

The tradeoff here is between flexibility and predictability. Allowing policy variation supports real-world requirements, but without a consistent model, it introduces uncertainty into the system.

Broken feedback loops from runtime to build

The final and often most critical failure mode is the breakdown of feedback loops. Runtime signals—anomalies, incidents, unexpected behavior—are generated continuously, but they rarely influence earlier stages of the DevSecOps Pipeline.

When feedback loops are broken, the pipeline becomes static. It continues to enforce the same guarantees, even when those guarantees no longer reflect actual risk. Over time, this divergence grows, and the system becomes increasingly disconnected from reality.

This is not a tooling problem, but a system design issue. The DevSecOps Pipeline must be capable of incorporating runtime insights into build policies, dependency selection, and deployment rules. Without this, it cannot adapt.

The tradeoff is between stability and responsiveness. Integrating feedback introduces change into the system, which can be disruptive. However, ignoring feedback leads to stagnation, where the pipeline enforces outdated assumptions.

Positioning the DevSecOps Pipeline as a System of Organizational Trust

From delivery mechanism to control system

At a certain level of maturity, the DevSecOps Pipeline stops being an implementation detail of software delivery and becomes a defining element of how an organization manages risk, trust, and change. What began as a way to automate build and deployment evolves into a system that governs what is allowed to exist in production.

This shift is subtle but fundamental. A pipeline that only moves code is operational. A DevSecOps Pipeline that enforces guarantees across transitions becomes a control system. It defines the conditions under which software is considered trustworthy, and more importantly, ensures that those conditions are continuously validated.

The architectural implication is that the DevSecOps Pipeline is no longer owned solely by engineering teams. It becomes part of the organization’s governance model, influencing how decisions are made about releases, dependencies, and runtime behavior. This reframing aligns with broader software supply chain security models, where integrity is treated as a system-wide property rather than a stage-level concern.

Trust as a property of the system, not the team

In early-stage environments, trust is often implicit. Teams rely on experience, familiarity with codebases, and informal validation processes. As systems grow, this model breaks down. Trust must become explicit, verifiable, and independent of individual teams.

The DevSecOps Pipeline enables this transition by embedding trust into the system itself. Guarantees are no longer based on who made a change or which team owns a service, but on whether the system can verify that required conditions are met. This reduces reliance on tribal knowledge and creates a shared foundation for decision-making.

The non-obvious benefit is that trust becomes portable. An artifact that carries verifiable guarantees can move across environments and teams without losing its integrity. This is what allows large organizations to scale safely—trust is no longer recreated at each boundary, but preserved and validated across the entire DevSecOps Pipeline.
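Portable trust can be sketched as an attestation that travels with the artifact and is re-verified at each boundary. The example below is a simplification under stated assumptions: it uses a shared-secret HMAC from the Python standard library to stay self-contained, whereas a real deployment would more likely rely on asymmetric signatures so that verifiers never hold signing material. The `attest` and `verify` helpers and the claim names are hypothetical.

```python
import hashlib
import hmac
import json


def attest(artifact: bytes, claims: dict, key: bytes) -> dict:
    """Bind claims to an artifact digest and sign the resulting statement."""
    statement = {"digest": hashlib.sha256(artifact).hexdigest(), "claims": claims}
    payload = json.dumps(statement, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"statement": statement, "signature": signature}


def verify(artifact: bytes, attestation: dict, key: bytes) -> bool:
    """Check that the statement is authentic and describes this exact artifact."""
    statement = attestation["statement"]
    payload = json.dumps(statement, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, attestation["signature"]):
        return False  # the statement was altered or signed with another key
    actual_digest = hashlib.sha256(artifact).hexdigest()
    return hmac.compare_digest(statement["digest"], actual_digest)


if __name__ == "__main__":
    key = b"demo-key"  # placeholder secret for illustration only
    artifact = b"binary-contents"
    attestation = attest(artifact, {"sast": "passed", "sbom": "attached"}, key)
    print(verify(artifact, attestation, key))         # unchanged artifact
    print(verify(artifact + b"x", attestation, key))  # modified artifact
```

The receiving environment does not need to know who built the artifact or repeat the original checks; it only needs to confirm that the guarantees it cares about are present and still bound to the bytes it is about to run.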

The tradeoff is a shift in responsibility. Teams must adapt to working within constraints defined by the system. While this can initially feel restrictive, it ultimately reduces uncertainty and enables more predictable outcomes.

The DevSecOps Pipeline as a platform capability

As the DevSecOps Pipeline matures, it converges with platform engineering. The pipeline is no longer a series of scripts or tools, but a capability exposed through the platform. Teams interact with it through interfaces, while enforcement, validation, and policy decisions are handled centrally.

This convergence is not accidental. Platform engineering emerges as a response to the same scaling challenges that shape the DevSecOps Pipeline. As internal developer platforms evolve to reduce fragmentation and improve consistency, organizations move from ad-hoc pipelines to structured systems that standardize how software is built and delivered.

The DevSecOps Pipeline, in this context, becomes the backbone of that platform. It provides the mechanisms through which guarantees are enforced and trust is maintained. Rather than each team implementing its own version of security and delivery, the platform ensures that these concerns are handled consistently across the organization.

The tradeoff is between flexibility and standardization. A platform-driven pipeline reduces inconsistency but requires careful design to avoid becoming overly rigid. The goal is not to constrain teams, but to provide a reliable system they can build upon.

Designing for continuous verification, not static compliance

A common misconception is that a well-designed DevSecOps Pipeline leads to compliance as an end state. In reality, compliance is transient. Systems change, dependencies evolve, and runtime conditions introduce new risks.

The DevSecOps Pipeline must therefore be designed for continuous verification. Guarantees are not established once and assumed to hold indefinitely; they are continuously evaluated as the system evolves. This is where the integration of runtime feedback becomes critical, ensuring that the pipeline adapts based on real-world behavior.

This perspective aligns with zero trust principles, where no component is inherently trusted and verification is continuous rather than assumed. The focus shifts from passing checks to maintaining system integrity over time, which matches the dynamic nature of modern software: change is constant, and assumptions must be revalidated as it happens.

The tradeoff is increased system complexity. Continuous verification requires coordination across multiple layers of the pipeline. However, without it, the system becomes static and gradually diverges from reality.
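A minimal way to model guarantees that expire rather than hold indefinitely is to give each one a verification timestamp and a maximum age, then ask which ones are due for re-evaluation. The sketch below assumes hypothetical guarantee names and age limits; how re-verification is actually triggered (a scheduler, a runtime event, a new advisory) is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


# Hypothetical record of a guarantee and when it was last verified.
@dataclass(frozen=True)
class Guarantee:
    name: str
    verified_at: datetime
    max_age: timedelta

    def is_current(self, now: datetime) -> bool:
        """A guarantee holds only while its last verification is recent enough."""
        return now - self.verified_at <= self.max_age


def needs_reverification(guarantees: list[Guarantee], now: datetime) -> list[str]:
    """Return the names of guarantees that can no longer be assumed to hold."""
    return [g.name for g in guarantees if not g.is_current(now)]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    guarantees = [
        Guarantee("dependency-scan", now - timedelta(days=2), timedelta(days=1)),
        Guarantee("image-signature", now - timedelta(hours=1), timedelta(days=30)),
    ]
    print(needs_reverification(guarantees, now))
```

Choosing different maximum ages per guarantee is one way to contain the added complexity: fast-moving inputs such as dependency data are rechecked often, while slower-changing properties are rechecked rarely.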

A forward-looking model for software delivery

The DevSecOps Pipeline represents more than a set of practices—it defines how organizations manage the lifecycle of trust in software systems. As delivery accelerates and systems become more distributed, the ability to enforce guarantees consistently becomes a competitive advantage.

Organizations that treat the DevSecOps Pipeline as a control system gain the ability to move quickly without sacrificing integrity. They can scale teams, introduce new services, and adapt to changing requirements while maintaining a consistent model of trust.

Looking forward, the evolution of the DevSecOps Pipeline will be shaped by how well it integrates with broader system design. It will extend beyond CI/CD, influencing areas such as supply chain security, runtime governance, and platform engineering. The pipeline becomes not just a path from code to production, but a system that defines what production is allowed to be.

In this sense, the DevSecOps Pipeline is not a feature of modern engineering—it is the infrastructure through which trust is built, enforced, and sustained.